The first sign language AI is here Circuit Diagram

The deaf and mute population has difficulty conveying their thoughts and ideas to others. Sign language is their most expressive mode of communication, but the general public is largely unfamiliar with it, so deaf and mute people have difficulty communicating with others. A system that can correctly translate sign language motions to speech and vice versa in real time is required to overcome ...

Sign Language Recognition has become increasingly important as a means to improve access and effective communication for the hearing impaired through Human-Computer Interaction (HCI). This paper presents a hybrid approach, unifying deep learning with graph theory, that effectively recognizes sign language gestures, building on recent state-of-the-art advances in artificial intelligence.

Sign language is the only way for deaf and mute people to express their needs and feelings. Most people who are not deaf or mute do not understand sign language, which leads to many difficulties for deaf and mute people in their social lives. Sign language interpretation systems and applications have received a lot of attention in recent years. In this paper, we review sign language recognition and ...

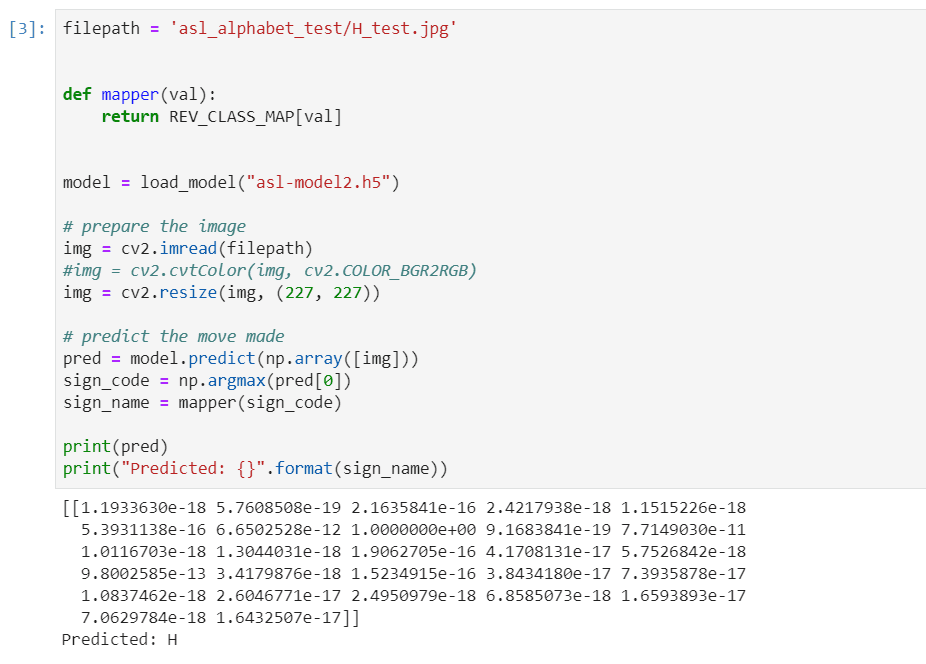

The goal of this deep learning project is to create a model for sign language recognition using a convolutional neural network (CNN), built with the Keras package and using OpenCV for live image capture. The AI-powered sign language recognition project aims to develop, implement, and evaluate a system that accurately interprets sign language gestures and translates them into text or speech. The project involves selecting and analyzing a diverse range of sign language gestures across various contexts and applications.
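To make concrete what a convolutional layer computes on a captured frame, the following is a minimal pure-Python sketch of one convolution, ReLU, and 2x2 max-pooling step. It is not the project's model: in Keras these steps would normally be expressed with `Conv2D` and `MaxPooling2D` layers, and the kernel weights would be learned rather than fixed. The 5x5 image, the edge-detecting kernel, and all function names here are assumptions chosen for illustration.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` with stride 1 and no padding.

    This is the cross-correlation that CNN layers compute.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Replace negative activations with zero."""
    return [[max(0.0, v) for v in row] for row in feature_map]


def max_pool_2x2(feature_map):
    """Keep the largest value in each non-overlapping 2x2 window.

    A trailing odd row or column is dropped.
    """
    return [
        [
            max(feature_map[r][c], feature_map[r][c + 1],
                feature_map[r + 1][c], feature_map[r + 1][c + 1])
            for c in range(0, len(feature_map[0]) - 1, 2)
        ]
        for r in range(0, len(feature_map) - 1, 2)
    ]


# Hypothetical 5x5 grayscale frame: dark on the left, bright on the right.
image = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]

# Hand-picked kernel that responds to dark-to-bright vertical edges.
kernel = [[-1.0, 1.0],
          [-1.0, 1.0]]

pooled = max_pool_2x2(relu(conv2d_valid(image, kernel)))
print(pooled)  # the strong response sits in the column containing the edge
```

A real classifier would stack several such layers, flatten the final feature maps, and feed them to dense layers ending in one output per gesture class.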

Sign language interpretation using machine learning and artificial ... Circuit Diagram

This project focuses on the detection and interpretation of American Sign Language (ASL) using deep learning and computer vision techniques. The research integrates MediaPipe, a framework developed by Google for hand tracking, with LSTM (Long Short-Term Memory) neural networks to create a system capable of recognizing sign language gestures.

"By improving American Sign Language recognition, this work contributes to creating tools that can enhance communication for the deaf and hard-of-hearing community," says Dr. Stella Batalama, Dean of the FAU College of Engineering and Computer Science. Dr. Batalama emphasizes that this technology could make daily interactions in education, healthcare, and social settings more seamless and ...

Sign language recognition (SLR) technology uses AI-driven algorithms to translate gestures, body movements, and facial expressions into spoken or written language. This process requires complex machine learning models trained on thousands of hours of sign language data. These systems rely heavily on deep learning frameworks to capture subtle nuances, such as finger placement or hand motion speed.
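For the MediaPipe and LSTM combination mentioned in this section, one step that often sits between the two is turning per-frame hand landmarks into a fixed-length sequence. The sketch below shows one way to do that in plain Python. MediaPipe's hand model reports 21 landmarks per hand, each with x, y, and z, and index 0 is the wrist. The wrist-relative normalization, the sequence length of 30, the zero-padding at the front, and the function names are all assumptions for illustration, not details taken from the project.

```python
from typing import List, Sequence, Tuple

Landmark = Tuple[float, float, float]  # (x, y, z) for one hand keypoint

NUM_LANDMARKS = 21  # MediaPipe's hand model reports 21 landmarks per hand
WRIST = 0           # index 0 is the wrist in that model
FEATURES_PER_FRAME = NUM_LANDMARKS * 3


def frame_to_features(landmarks: Sequence[Landmark]) -> List[float]:
    """Flatten one frame into 63 wrist-relative values (an assumed scheme)."""
    if len(landmarks) != NUM_LANDMARKS:
        raise ValueError(f"expected {NUM_LANDMARKS} landmarks, got {len(landmarks)}")
    wx, wy, wz = landmarks[WRIST]
    features: List[float] = []
    for x, y, z in landmarks:
        # Subtracting the wrist makes the features independent of
        # where the hand appears in the image.
        features.extend((x - wx, y - wy, z - wz))
    return features


def build_sequence(frames: Sequence[Sequence[Landmark]],
                   seq_len: int = 30) -> List[List[float]]:
    """Return exactly `seq_len` rows of 63 features.

    Longer clips keep their most recent frames; shorter clips are
    zero-padded at the front.
    """
    if seq_len <= 0:
        raise ValueError("seq_len must be positive")
    rows = [frame_to_features(f) for f in frames][-seq_len:]
    padding = [[0.0] * FEATURES_PER_FRAME for _ in range(seq_len - len(rows))]
    return padding + rows


# Hypothetical clip: two frames of made-up landmark coordinates.
clip = [[(0.1 * i, 0.2, 0.0) for i in range(NUM_LANDMARKS)] for _ in range(2)]
sequence = build_sequence(clip, seq_len=4)
print(len(sequence), len(sequence[0]))  # rows x features per row
```

Each resulting sequence has shape (timesteps, features), which matches the per-sample input layout that recurrent layers such as an LSTM expect.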